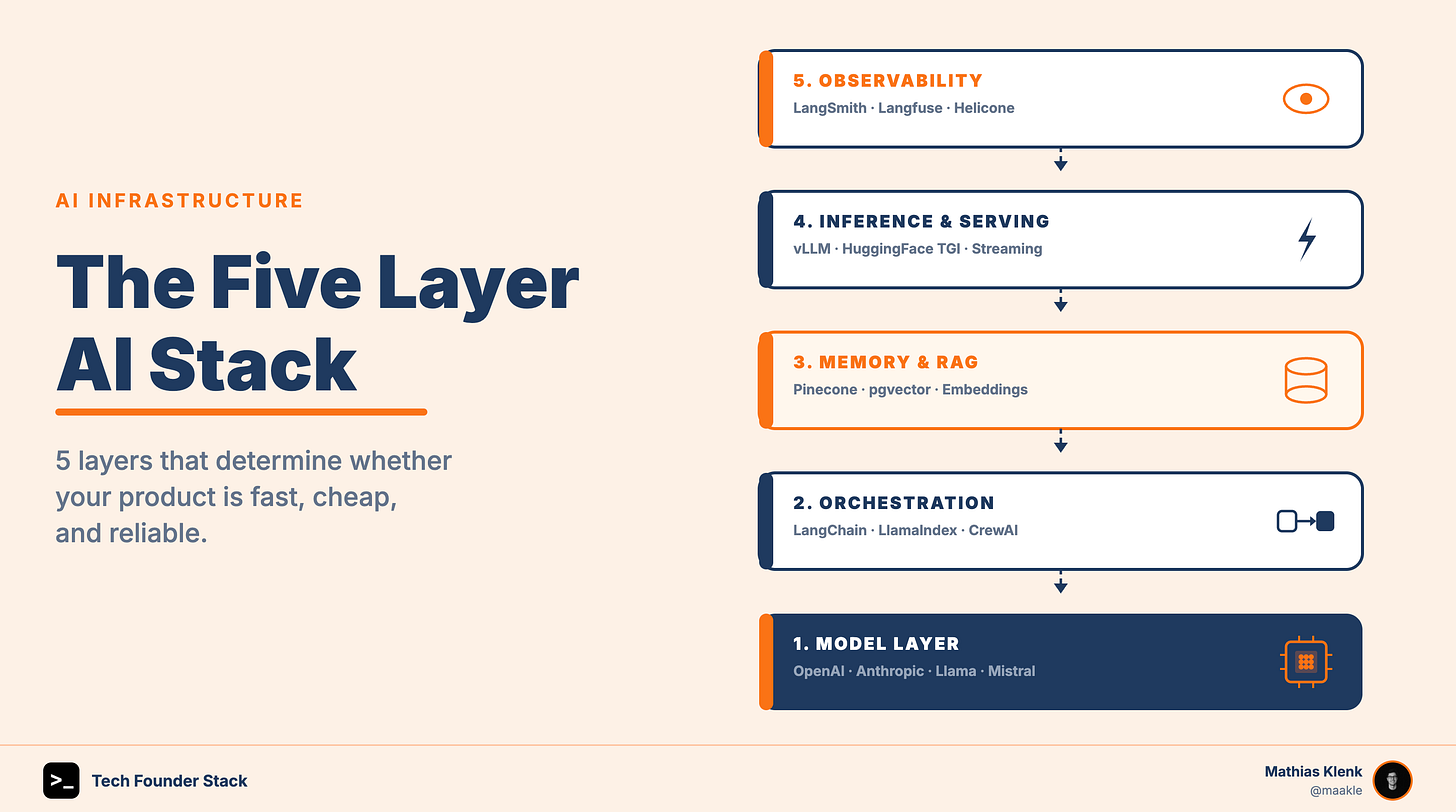

The Minimum AI Stack Every Technical Founder Should Understand

The five layers that determine whether your AI product is fast, cheap, and reliable.

There is a particular kind of founder anxiety making the rounds right now. It sounds like this: “I don’t have a machine learning background. Am I already behind?”

The short answer is no. But there is a catch.

You do not need to understand backpropagation or be able to fine-tune a transformer from scratch. What you absolutely must understand is the architecture that sits between a model and your users. The decisions you make about that architecture will determine your cost structure, your latency profile, your reliability guarantees, and ultimately whether your product survives contact with scale.

This is the minimum AI stack every technical founder needs to understand. Not to build it from scratch, but to make better decisions about it.

Why This Matters More Than the Model Itself

Most founders fixate on the model. GPT-4o vs. Claude vs. Gemini, as if model selection is the primary architectural decision. It is not. Models are commoditizing faster than anyone predicted. What a competitor cannot easily copy is the infrastructure reasoning you build around the model: your caching strategy, your retrieval approach, your fallback logic, your cost controls.

The technical founders who are winning right now are not the ones who trained a better model. They are the ones who built a faster, cheaper, more reliable product on top of the same models everyone else has access to. That is a stack problem, not a model problem.

The Five Layers You Must Internalize

1. The Model Layer (API vs. Self-Hosted)

At the top of the stack sits the model itself. The practical choice in 2025 is almost always between calling an API (OpenAI, Anthropic, Google, Cohere) and self-hosting an open-source model (Llama, Mistral, Qwen).

The build vs. buy tension here is real and consequential. API providers give you state-of-the-art capability with zero infrastructure burden, but you are paying a per-token tax on every request, accepting their latency, and depending on their uptime. Self-hosted models give you cost control and data sovereignty, but require you to manage inference infrastructure, and that is genuinely not trivial.

The decision rule is simple: use APIs until your token costs become a meaningful line item in your P&L or until your data privacy requirements force the issue. Then evaluate self-hosting at the margin.

What you need to understand here is how tokens are priced, what context window size costs you, and how model capability differences actually map to your use case. Flagship models are rarely 10x better, but they are often 10x more expensive.

2. The Orchestration Layer

This is where most of the product logic lives, and where most founders underestimate complexity. Orchestration covers everything that happens between receiving a user request and sending it to the model: prompt assembly, conversation history management, tool calling, multi-step reasoning chains, and routing between models.

Frameworks like LangChain, LlamaIndex, and the emerging wave of agent frameworks (AutoGen, CrewAI) abstract a lot of this. But abstraction has a cost. These frameworks add latency, introduce failure modes, and can obscure what is actually happening in your pipeline. A lot of production teams have quietly started writing thin, custom orchestration rather than depending on a heavy framework.

What you need to understand is the difference between a single inference call and a multi-step agentic chain. The latter can multiply your latency and cost by 5 to 10x while introducing compounding failure probability at each step.

3. The Memory and Retrieval Layer (RAG)

Pure in-context prompting has a hard ceiling: the context window. Retrieval-Augmented Generation solves this by storing knowledge externally and retrieving relevant chunks at query time. If your product involves large knowledge bases, long documents, or personalized user history, you are almost certainly building some form of RAG.

The components are a vector database (Pinecone, Weaviate, Chroma, pgvector) and an embedding model (OpenAI text-embedding, Cohere Embed, or open-source alternatives). The architectural decisions around chunking strategy, embedding model quality, similarity search parameters, and reranking have an outsized impact on both answer quality and cost.

What you need to understand is that retrieval quality directly constrains generation quality. A retrieval system that surfaces the wrong context will produce wrong answers regardless of how capable your model is. Garbage in, garbage out still applies.

4. The Inference and Serving Layer

If you are self-hosting models, this layer becomes critical fast. Tools like vLLM and Hugging Face’s TGI handle the problem of serving large models efficiently by managing memory, batching concurrent requests, and delivering consistent throughput. Without optimized inference, a 70B-parameter model serving 100 concurrent users will grind to a halt.

Even if you are API-only today, you should understand this layer conceptually, because it governs the latency and throughput guarantees your provider can actually deliver. It also explains why streaming responses exist: delivering tokens progressively reduces perceived latency without actually speeding up total generation time.

What you need to understand is the difference between time-to-first-token, which is what the user feels, and total generation time, which determines throughput. Both matter, but they call for different optimizations.

5. The Observability Layer

This is the layer most early-stage teams skip and later regret. In traditional software, a 500 error is deterministic and reproducible. In AI systems, failures are probabilistic. A model might hallucinate on 3% of inputs, degrade in quality as context grows, or behave differently at the edges of your prompt templates.

Without observability, you are guessing. Tools like LangSmith, Langfuse, and Helicone let you trace individual requests, log inputs and outputs, monitor cost per session, and catch quality regressions before your users do.

What you need to understand is that you cannot optimize what you cannot measure. Setting up basic logging and tracing from day one costs you almost nothing. Not having it when something breaks quietly costs you a lot.

The Build vs. Buy Mental Model

Every layer in this stack presents a build vs. buy decision. The heuristic that has served the best founders well is to buy the undifferentiated infrastructure and build the differentiated logic.

Your retry logic, your fallback routing, your cost guardrails, there are vendors and open-source tools for all of this. The prompting strategy tuned to your specific use case, the retrieval pipeline that encodes your domain knowledge, the evaluation harness that catches regressions in your product quality, that is where you earn your moat.

The founders who build everything themselves in the early days are not being rigorous. They are being slow. The founders who buy everything and never understand the plumbing beneath it will be stuck when the vendor bill becomes existential or when they need to debug a systemic quality problem.

What “Understanding the Stack” Actually Means

You do not need to be an ML engineer. You do not need to write CUDA kernels or implement attention mechanisms.

You need to be able to answer these questions without reaching for Google:

Where does my token cost go, and what would halve it without sacrificing quality?

What is the latency budget of each step in my pipeline, and where is the bottleneck?

What happens to my product if my primary model API goes down for 20 minutes?

How do I know if my model’s answer quality is degrading over time?

If you can answer those, you have the minimum viable understanding of the AI stack. The rest is implementation detail, and implementation detail you can delegate.

The stack is not the moat. Your judgment about the stack is.

This is a great overview of the AI tech stack! The core technical decisions across these layers directly affect cost, performance, and reliability. You can make far more informed build‑vs‑buy decisions when you clearly understand the tradeoffs of each choice. I think going forward, most products will largely be model agnostic, taking advantage of different models' strengths, without being entirely dependent on any single one.